Medium

1w

Image Credit: Medium

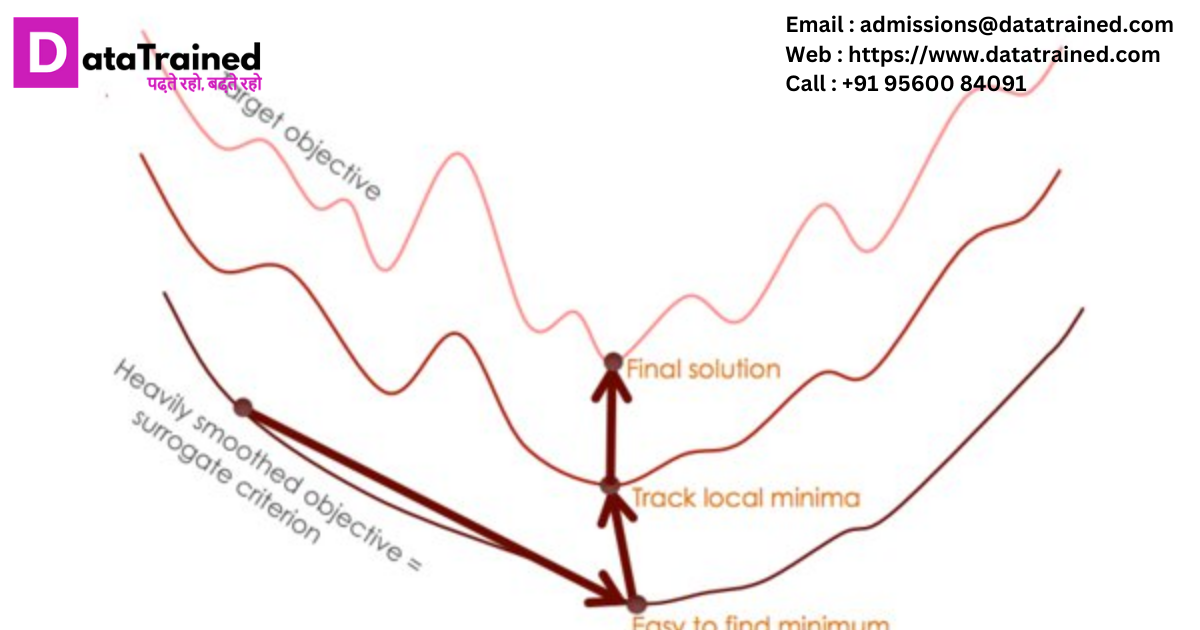

Asymmetric Certified Robustness via Feature-Convex Neural Networks

- Neural networks remain vulnerable to adversarial attacks, and researchers are developing classifiers whose robustness can be formally certified.

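- One approach is the feature-convex neural network, which offers asymmetric certified robustness: predictions are certified for a single sensitive class only, the class an adversary is trying to push inputs out of.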

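- A feature-convex network composes a Lipschitz-continuous feature map with a network that is convex in those features. Because a convex function lies above its tangent at every point, an input scored into the sensitive class comes with a certified radius that can be computed in closed form.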

- The article reports that, in experiments, feature-convex networks achieve stronger certified robustness than traditional methods while maintaining high accuracy.
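
The convexity argument behind the certificate can be sketched in a few lines. This is a minimal illustration, not the article's implementation. It assumes an identity feature map (Lipschitz constant 1), ℓ2-bounded perturbations, and a tiny hand-written convex network. The names `g`, `grad_g`, and `certified_radius` are hypothetical. For a convex score `g`, any perturbation `d` satisfies `g(x + d) >= g(x) - ||grad g(x)|| * ||d||`, so the prediction cannot flip while `||d|| < g(x) / ||grad g(x)||`.

```python
import numpy as np

# Toy convex network: g(z) = w2 . relu(W1 z + b1) + b2 with w2 >= 0.
# A non-negative combination of ReLUs of affine maps is convex in z.
# These weights are hand-picked for illustration: g(z) = |z1| + |z2| - 1.
EXAMPLE_PARAMS = (
    np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]),  # W1
    np.zeros(4),                                                   # b1
    np.ones(4),                                                    # w2 (non-negative)
    -1.0,                                                          # b2
)

def g(params, z):
    """Convex score; g(z) > 0 means the sensitive class is predicted."""
    W1, b1, w2, b2 = params
    return float(w2 @ np.maximum(W1 @ z + b1, 0.0) + b2)

def grad_g(params, z):
    """A (sub)gradient of g at z."""
    W1, b1, w2, _ = params
    active = (W1 @ z + b1 > 0).astype(float)
    return W1.T @ (w2 * active)

def certified_radius(params, z, lipschitz_phi=1.0):
    """l2 radius within which the sensitive-class prediction cannot change.

    lipschitz_phi is the assumed Lipschitz constant of the feature map
    (1.0 for the identity map used in this sketch).
    """
    score = g(params, z)
    if score <= 0:
        return 0.0  # no certificate for the non-sensitive class
    grad_norm = np.linalg.norm(grad_g(params, z))
    if grad_norm == 0:
        return float("inf")  # a convex g with zero gradient sits at its minimum
    return score / (lipschitz_phi * grad_norm)

if __name__ == "__main__":
    x = np.array([2.0, 1.0])
    print(g(EXAMPLE_PARAMS, x), certified_radius(EXAMPLE_PARAMS, x))
```

For the point `(2, 1)` the score is 2 and the radius is about 1.414, which matches the ℓ2 distance from that point to the region where `|z1| + |z2| <= 1`. The certificate is one-sided by construction: inputs with a non-positive score receive a radius of 0, which is the asymmetry the bullets describe.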
