Medium

2w


Image Credit: Medium

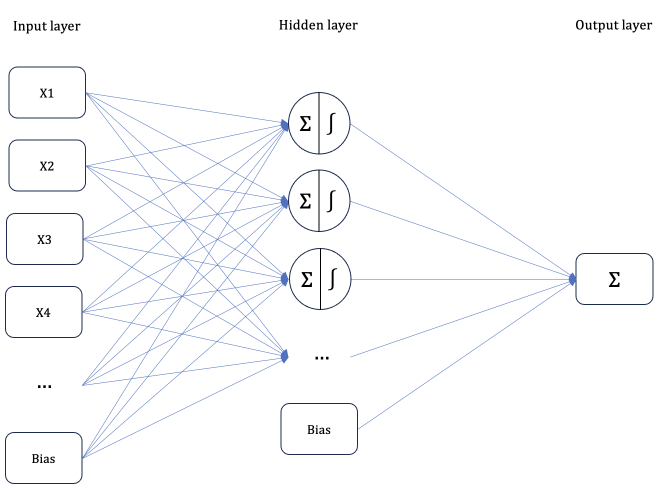

Feature Importance in Neural Networks

- The importance of a feature in a neural network is measured by how much the output changes per unit change in that feature, with the other inputs held fixed.

- Calculating feature importance resembles the way a network's parameters are adjusted during training: both rely on partial derivatives and the chain rule, but here the derivative is taken with respect to an input rather than a weight.

- To compute feature importance, every route from an input to the output is considered, and the weights and activation-function derivatives along each route are multiplied together.

- The products for each input are then summed across all of its routes to give that feature's overall importance.
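
The route-by-route calculation described above can be sketched for a very small network. This is a minimal illustration, not the article's own code: the weights, the 2-2-1 layout, the tanh hidden activation, the linear output, and all function names are assumptions chosen to keep the example short. A finite-difference check is included as a sanity test of the chain-rule result.

```python
import math

# Hypothetical weights for a tiny network: 2 inputs, 2 tanh hidden units,
# 1 linear output. None of these values come from the article.
W1 = [[0.5, -1.0],   # weights into hidden unit 0 from inputs 0 and 1
      [1.5, 0.25]]   # weights into hidden unit 1 from inputs 0 and 1
B1 = [0.1, -0.2]     # hidden biases
W2 = [2.0, -0.5]     # weights from each hidden unit to the output
B2 = 0.3             # output bias


def hidden_pre_activations(x):
    """Weighted sum plus bias for each hidden unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(W1, B1)]


def forward(x):
    """Network output for input vector x."""
    z = hidden_pre_activations(x)
    return sum(w * math.tanh(zj) for w, zj in zip(W2, z)) + B2


def feature_importance(x):
    """Sum, over every input-to-output route, of the product of the
    weights and the activation derivative along that route."""
    z = hidden_pre_activations(x)
    importances = []
    for i in range(len(x)):
        total = 0.0
        for j, zj in enumerate(z):
            d_act = 1.0 - math.tanh(zj) ** 2      # derivative of tanh at zj
            total += W1[j][i] * d_act * W2[j]     # one route: i -> j -> output
        importances.append(total)
    return importances


def finite_difference(x, i, eps=1e-6):
    """Numerical estimate of d(output)/d(x[i]) for comparison."""
    up, down = list(x), list(x)
    up[i] += eps
    down[i] -= eps
    return (forward(up) - forward(down)) / (2 * eps)


x = [0.4, -0.7]
imp = feature_importance(x)
for i, value in enumerate(imp):
    print(f"feature {i}: path sum = {value:.6f}, "
          f"finite difference = {finite_difference(x, i):.6f}")
```

Because the activation derivative depends on the input, the values are local: they describe importance at the chosen point `x`, and a different input can give a different ranking.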
