Medium

1w

161

Image Credit: Medium

From Words to Wisdom

- Text data is unstructured and requires specialized techniques in data science to extract useful information.

- Text preprocessing is a critical step in making raw text data ready for analysis.

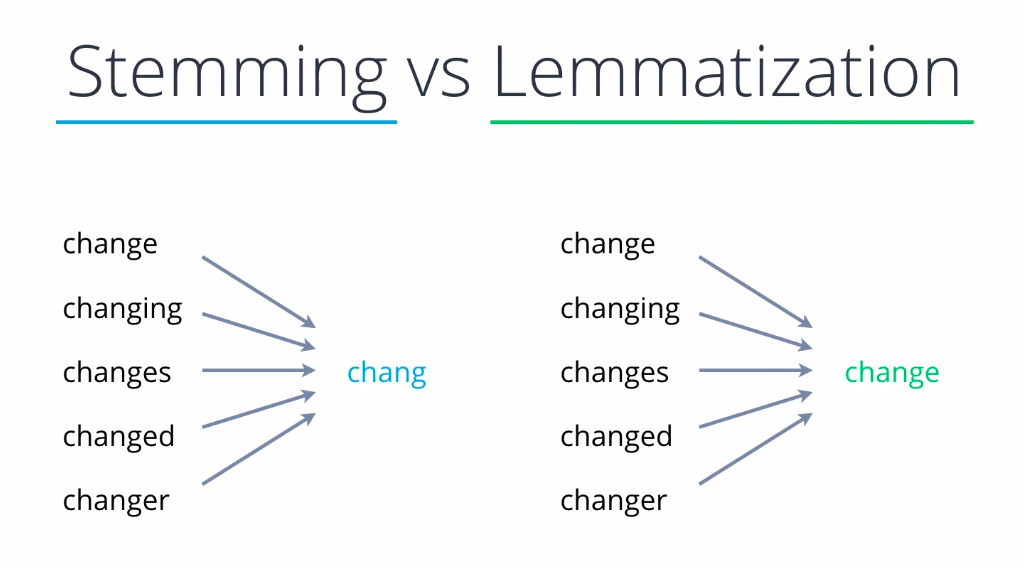

- Stemming algorithms and lemmatization are two methods of text preprocessing, with lemmatization being more precise.

- The Bag of Words (BoW) model and Term Frequency-Inverse Document Frequency (TF-IDF) are foundational techniques used in text analysis and natural language processing.

- BoW treats text as a mere collection of words, ignoring the grammar and the order in which words appear.

- TF-IDF is a statistical measure used to evaluate how important a word is to a document in a collection or corpus.

- BoW and TF-IDF have limitations in handling synonyms and polysemy.

- In handling large amounts of text data, BoW can result in high-dimensional and sparse data.

- Without additional processing like stop-word removal or term weighting, BoW models may be biased towards frequent, less informative words.

- Service industry companies like hotels or airlines collect vast amounts of text data to enhance customer satisfaction, improve services, and tailor marketing strategies.

Read Full Article

9 Likes

For uninterrupted reading, download the app