Towards Data Science

1w

Image Credit: Towards Data Science

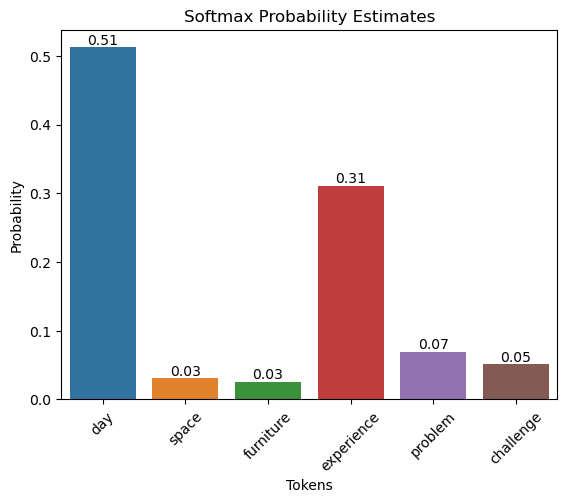

How does temperature impact next token prediction in LLMs?

- The temperature parameter in LLMs rescales the model's logits before the softmax, which changes the probability distribution used to pick the next token.

- At a temperature of 1, the probabilities are the same as those produced by the standard softmax function.

- Increasing the temperature above 1 flattens the distribution, which broadens the range of plausible candidates for the next token.

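- Decreasing the temperature below 1 sharpens the distribution: probability concentrates on the highest-scoring tokens, so the model appears more confident and its output becomes less varied.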
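
The points above can be illustrated with a minimal sketch of temperature-scaled softmax, assuming the usual formulation in which each logit is divided by the temperature T before the softmax is applied. The function name `softmax_with_temperature` and the example logits are hypothetical, chosen only for illustration; they are not taken from the article.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Return next-token probabilities for the given logits.

    Assumes the common formulation softmax(logits / temperature).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Divide every logit by the temperature before normalizing.
    scaled = [z / temperature for z in logits]
    # Subtract the maximum for numerical stability; the result is unchanged.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate tokens.
example_logits = [2.0, 1.0, 0.1]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(example_logits, t)
    print(f"T={t}: {[round(p, 3) for p in probs]}")
```

Running this should show the top token's probability shrinking as T grows and the remaining tokens gaining probability mass, while T=1 reproduces the plain softmax.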
