MIT

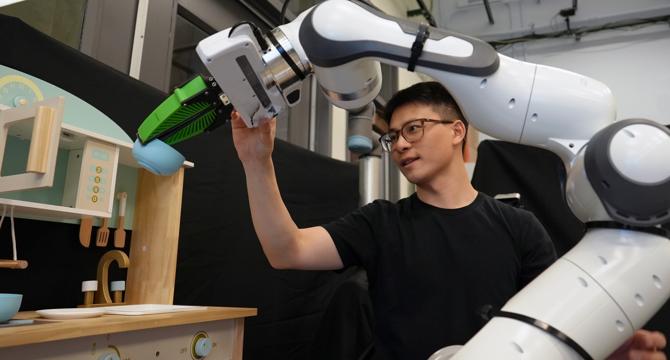

Image Credit: MIT

Robotic helper making mistakes? Just nudge it in the right direction

- MIT and NVIDIA researchers have developed a new framework for correcting robot behavior through simple interactions, such as pointing or nudging the robot in the right direction.

- The method lets users guide the robot with real-time feedback, without retraining its machine-learning model.

- In tests, the framework's success rate was 21 percent higher than that of an alternative method that did not use human interventions.

- It enables users to guide factory-trained robots through household tasks even when the robot has no prior knowledge of the environment or its objects.

- The method was developed by MIT researchers including Felix Yanwei Wang, with support from NVIDIA colleagues.

- Users can correct a misaligned robot in three ways: pointing at the target object, tracing a trajectory, or physically adjusting the robot's arm.

- To keep corrections from producing invalid actions, the framework uses a sampling procedure in which the robot chooses, from the actions its policy considers valid, the one most closely aligned with the user's goal.

- By incorporating user interactions, the robot can improve its behavior through immediate corrections and continuous learning.

- The researchers aim to enhance the speed of the sampling procedure and explore policy generation in new environments in future research.
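
The sampling idea in the summary above can be illustrated with a toy sketch. This is not the researchers' implementation: `toy_policy`, `sample_candidates`, `pick_aligned_action`, the 2D targets, and the nearest-distance rule are all assumptions made for illustration. The sketch draws candidate actions from a stand-in policy, so every candidate is one the policy itself would propose, then keeps the candidate closest to the point the user indicated.

```python
import math
import random


def toy_policy(rng):
    """Hypothetical stand-in for a pretrained policy.

    Proposes a 2D reach target near one of two objects the policy knows about.
    """
    center = rng.choice([(0.0, 0.0), (1.0, 1.0)])
    return (center[0] + rng.gauss(0, 0.05), center[1] + rng.gauss(0, 0.05))


def sample_candidates(policy, n, rng):
    """Draw n candidate actions; each is valid in the sense that the policy proposed it."""
    return [policy(rng) for _ in range(n)]


def pick_aligned_action(candidates, user_target):
    """Return the candidate with the smallest Euclidean distance to the user's target."""
    return min(candidates, key=lambda action: math.dist(action, user_target))


rng = random.Random(0)
candidates = sample_candidates(toy_policy, 32, rng)

# The user points at the object near (1, 1); this point is an assumed input.
user_target = (1.0, 1.0)
chosen = pick_aligned_action(candidates, user_target)
print(chosen)
```

Because the chosen action always comes from the policy's own samples, the user's input steers the robot without pushing it toward an action the policy would never produce. No model weights change in this sketch, which matches the summary's point that no retraining is needed.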
