Amazon

Image Credit: Amazon

Using Large Language Models on Amazon Bedrock for multi-step task execution

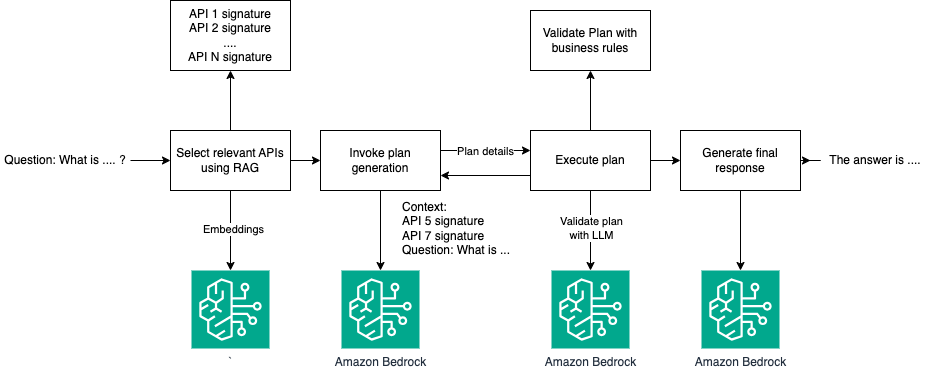

- Large language models (LLMs) can handle tasks that require dynamic, multi-step reasoning and execution, work that traditionally called for business intelligence specialists and data engineers.

- LLMs can break a complex task into steps, call external tools and APIs rather than answering with text alone, and use the results to produce more accurate, context-aware output.

- The post walks through an example, a patient record retrieval solution built on APIs, to illustrate the multi-step reasoning and execution process.

- The solution runs analytical queries against a Synthetic Patient Generation dataset and can be set up by following the steps provided in the post.

- The solution has two stages, planning and execution: the LLM formulates a plan from predefined API function signatures, and that plan is then executed programmatically to produce the final output.

- Plans are expressed as structured JSON, which gives the LLM an unambiguous format for chaining a series of data retrieval and transformation functions and helps keep results accurate.

- Error handling in the execution stage detects and addresses anomalies, which improves reliability and the overall user experience.

- Applying LLMs to complex analytical queries, demonstrated here on Amazon Bedrock, shows their potential to change how businesses make decisions.

- The authors, Bruno Klein, Rushabh Lokhande, and Mohammad Arbabshirani, bring backgrounds in machine learning, data engineering, and data science to the post.

- The article underscores how tool use lets LLMs go beyond text generation to deliver actionable outputs for business analytics and decision-making workflows.
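
The planning and execution stages described above can be sketched roughly as follows. This is a minimal illustration and not the code from the original post: the function names (`get_patients`, `filter_by_min_age`, `count_rows`), the plan schema, the `$step_id` reference convention, and the sample records are all assumptions made for the example. In a real deployment the JSON plan would be returned by a model hosted on Amazon Bedrock; here it is hard-coded so the executor can run on its own.

```python
import json

# Hypothetical in-memory stand-in for the synthetic patient dataset.
PATIENTS = [
    {"id": "p1", "age": 67, "condition": "diabetes"},
    {"id": "p2", "age": 45, "condition": "asthma"},
    {"id": "p3", "age": 72, "condition": "diabetes"},
]

# Hypothetical API functions the planner is allowed to reference.
def get_patients(condition):
    """Retrieval step: patients with the given condition."""
    return [p for p in PATIENTS if p["condition"] == condition]

def filter_by_min_age(rows, min_age):
    """Transformation step: keep rows at or above min_age."""
    return [r for r in rows if r["age"] >= min_age]

def count_rows(rows):
    """Transformation step: number of rows."""
    return len(rows)

FUNCTIONS = {
    "get_patients": get_patients,
    "filter_by_min_age": filter_by_min_age,
    "count_rows": count_rows,
}

class PlanError(Exception):
    """Raised when a plan cannot be parsed or executed."""

def resolve(value, results):
    """Replace a "$step_id" string with that step's stored output."""
    if isinstance(value, str) and value.startswith("$"):
        ref = value[1:]
        if ref not in results:
            raise PlanError(f"step output {ref!r} is not available")
        return results[ref]
    return value

def execute_plan(plan_json):
    """Run each step of a JSON plan in order and return the last output."""
    try:
        plan = json.loads(plan_json)
    except json.JSONDecodeError as exc:
        raise PlanError(f"plan is not valid JSON: {exc}") from exc
    steps = plan.get("steps") if isinstance(plan, dict) else None
    if not isinstance(steps, list) or not steps:
        raise PlanError("plan must contain a non-empty 'steps' list")
    results, output = {}, None
    for step in steps:
        name = step.get("function")
        if name not in FUNCTIONS:
            raise PlanError(f"unknown function: {name!r}")
        args = {k: resolve(v, results) for k, v in step.get("args", {}).items()}
        try:
            output = FUNCTIONS[name](**args)
        except TypeError as exc:  # wrong or missing arguments in the plan
            raise PlanError(f"bad arguments for {name!r}: {exc}") from exc
        results[step.get("id", name)] = output
    return output

# Plan for: "How many diabetes patients are 70 or older?"
EXAMPLE_PLAN = json.dumps({"steps": [
    {"id": "s1", "function": "get_patients", "args": {"condition": "diabetes"}},
    {"id": "s2", "function": "filter_by_min_age", "args": {"rows": "$s1", "min_age": 70}},
    {"id": "s3", "function": "count_rows", "args": {"rows": "$s2"}},
]})

print(execute_plan(EXAMPLE_PLAN))  # prints 1
```

Restricting the plan to a fixed registry of functions means the executor can reject a malformed or unexpected plan with a clear `PlanError` before anything runs, which is one simple way to get the kind of error handling the summary mentions.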
