Deep Learning News

Medium

Image Credit: Medium

The Magic Behind Recognizing a Scribble: How Neural Networks Learn

- Neural networks revolutionize artificial intelligence and machine learning by learning from data rather than predefined rules.

- Neural networks consist of interconnected neurons organized into layers to process and transmit information.

- In digit recognition, the input layer represents pixels, the hidden layers assist in learning complex patterns, and the output layer gives the network's prediction.

- Weights, connections, and biases control the influence between neurons in different layers of the network.

- Neurons in hidden layers specialize in recognizing features like edges, curves, or structural components, leading to accurate classification.

- Training neural networks involves feeding them labeled datasets to refine weights and biases for accurate predictions.

- The network learns to recognize complex patterns by tuning millions of tiny knobs, gradually improving its predictions.

- Neural networks go beyond digit recognition, powering various applications like image classification, natural language processing, and more.

- Their success relies on large labeled datasets that enable networks to learn specific patterns for each task.

- Neural networks leverage interconnected neurons, layers, weights, biases, and activation functions to process input data and make predictions.
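The layer structure described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not the article's code: the 28x28 input size (784 pixels), the 16-neuron hidden layer, the sigmoid activation, and the random untrained weights are all assumptions chosen for the example.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(weights . inputs + bias) per neuron."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def init_layer(n_in, n_out, rng):
    # Random, untrained parameters (assumption: real weights come from training).
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def predict(pixels, hidden, output):
    h = dense(pixels, hidden[0], hidden[1], sigmoid)        # hidden layer
    scores = dense(h, output[0], output[1], lambda z: z)    # output layer, one score per digit
    return softmax(scores)

rng = random.Random(0)
hidden = init_layer(784, 16, rng)   # input pixels -> hidden neurons
output = init_layer(16, 10, rng)    # hidden neurons -> digits 0-9
pixels = [rng.random() for _ in range(784)]  # stand-in for a flattened image
probs = predict(pixels, hidden, output)
predicted_digit = max(range(10), key=lambda d: probs[d])
```

With untrained weights the prediction is arbitrary; training is what adjusts `weights` and `biases` so that the highest probability lands on the correct digit.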

Medium

AI in Gaming: How It’s Changing the Industry

- AI is transforming the gaming industry with various applications.

- AI generates unique worlds and landscapes in games like No Man's Sky.

- AI-powered bots playtest games, detecting bugs faster than human testers.

- AI personalizes player experiences by adapting to their playstyle in games like Red Dead Redemption 2.

Medium

Explainable AI: Why We Need to Understand How AI Makes Decisions

- Explainable AI (XAI) is about building trust and understanding complex algorithms.

- XAI aims to make AI's decision-making process transparent.

- Transparency enhances the system's performance and allows developers to rectify issues efficiently.

- Hidden biases in AI decision-making processes have caused problems.

Medium

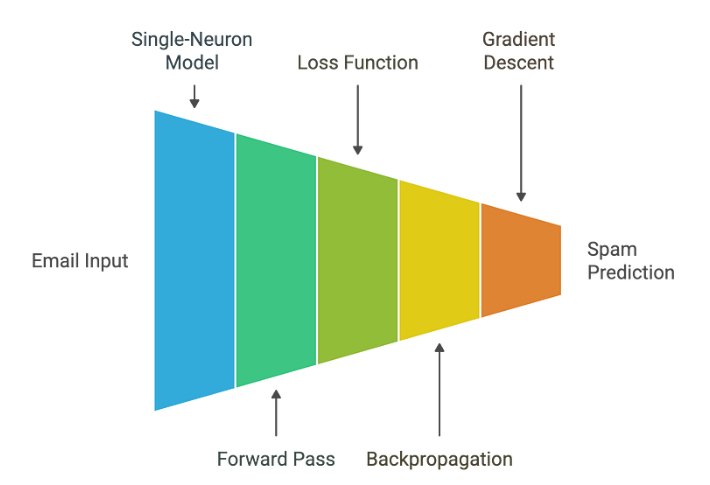

Inside AI #4: How Neural Networks Learn — Backpropagation and Gradient Descent Explained

- Neural networks learn through the process of backpropagation and gradient descent.

- The learning process involves making predictions, measuring the error, determining which parameters caused the error, and making small adjustments in the right direction.

- The weights and biases of the neuron are updated using the gradient descent algorithm, gradually improving the model's accuracy over time.

- By repeating this process with multiple examples, the model can learn to make more accurate predictions.
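The four steps listed above (predict, measure the error, attribute it to parameters, adjust) can be sketched for a single linear neuron. This is an illustrative toy under stated assumptions: the squared-error loss, the learning rate, and the data (points on the line y = 2x + 1) are chosen for the example and do not come from the article. With one neuron, backpropagation reduces to a single application of the chain rule.

```python
def train_neuron(examples, learning_rate=0.05, epochs=500):
    """Fit prediction = w * x + b by per-example gradient descent on squared error."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, target in examples:
            prediction = w * x + b           # 1. make a prediction
            error = prediction - target      # 2. measure the error
            grad_w = 2 * error * x           # 3. d(error**2)/dw via the chain rule
            grad_b = 2 * error               #    d(error**2)/db
            w -= learning_rate * grad_w      # 4. small step against the gradient
            b -= learning_rate * grad_b
    return w, b

# Toy data generated from y = 2x + 1 (assumption for illustration).
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
w, b = train_neuron(examples)
```

After training, `w` and `b` should be close to 2 and 1. In a multi-layer network the same update rule applies to every weight, with backpropagation supplying each gradient.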

Towards Data Science

Adding Training Noise To Improve Detections In Transformers

- Modern vision transformers add noise during training to improve object detection performance.

- Early vision transformers like DETR used learned decoder queries for object detection, but had slow convergence.

- Recent transformer architectures have implemented deformable aggregation and spatial anchors for improved detection results.

- These detectors use the Hungarian algorithm to match predictions to ground-truth boxes, and because the matching can change from one iteration to the next, the training objective is unstable.

- DN-DETR addresses the unstable matching issue by introducing noise to ground truth boxes, improving model stability and convergence speed.

- DINO enhances denoising by incorporating contrastive learning, improving detection performance even further.

- Temporal models like Sparse4Dv3 leverage denoising and temporal denoising groups for object tracking across frames.

- Denoising in vision transformers accelerates convergence and boosts detection results, especially with learnable anchors.

- The use of denoising raises questions about the necessity of learnable anchors and the impact on models with non-learnable anchors.

- While denoising improves stability in gradient descent, the relevance in models with spatially constrained queries remains a topic for further exploration.
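The denoising idea summarized above can be sketched as follows: each ground-truth box is perturbed, and the noisy copy is paired with its original, so the reconstruction target is known in advance and needs no Hungarian matching. This is a simplified sketch, not the DN-DETR implementation; the `(cx, cy, w, h)` box format, the uniform noise, and the `scale` value are assumptions for illustration.

```python
import random

def add_box_noise(box, scale, rng):
    """Jitter a (cx, cy, w, h) box whose values are normalized to [0, 1]."""
    cx, cy, w, h = box
    # Shift the center by at most scale * half the box size.
    cx += rng.uniform(-1, 1) * scale * w / 2
    cy += rng.uniform(-1, 1) * scale * h / 2
    # Rescale width and height by a factor in [1 - scale, 1 + scale].
    w *= 1 + rng.uniform(-1, 1) * scale
    h *= 1 + rng.uniform(-1, 1) * scale
    clamp = lambda v: min(max(v, 0.0), 1.0)
    return tuple(clamp(v) for v in (cx, cy, w, h))

def make_denoising_pairs(gt_boxes, scale=0.4, seed=0):
    """Return (noisy_box, target_box) pairs; the target is fixed, so no matching step."""
    rng = random.Random(seed)
    return [(add_box_noise(box, scale, rng), box) for box in gt_boxes]

gt_boxes = [(0.5, 0.5, 0.2, 0.2), (0.3, 0.7, 0.1, 0.4)]  # made-up example boxes
pairs = make_denoising_pairs(gt_boxes)
```

During training, the noisy boxes would be fed to the decoder as extra queries and the loss would compare each reconstruction with its paired target.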

Medium

The current rate of Jiocoin (JIO) varies depending on the source and token version:

- The Jiocoin token price is currently near zero on some platforms, while Reliance’s Jio Coin trades around ₹20-21 per token with limited market activity.

- Users can earn Jio Coins by using the JioSphere browser and other Jio apps.

- Jiocoin is not a traditional cryptocurrency available on exchanges, and it is only obtainable by earning through Jio’s ecosystem.

- JioSphere is unique because it lets users earn Jio Coins simply by engaging with the browser, browsing, watching content, or using built-in features.

Medium

Can we B.A.N Backdoor Attacks in Neural Networks?

- Deep Neural Networks (DNNs) are vulnerable to backdoor attacks, where an attacker can tamper with the model to behave maliciously on specific inputs.

- Backdoor attacks can be injected during training or by altering the weights and biases of the neural network.

- Outsourcing training through Machine Learning as a Service (MLaaS) can introduce security risks, including receiving backdoored models.

- Backdoored neural networks perform well on regular inputs but exhibit misclassifications based on hidden triggers, posing risks in applications like autonomous driving.

- Methods like Neural Cleanse (NC) and FeatureRE have been developed to detect backdoors in neural networks and reverse-engineer their triggers.

- Recent advancements like BTIDBF and BAN aim to address feature space backdoor attacks by efficiently detecting triggers and utilizing adversarial noise.

- The BAN approach generates adversarial neuron noise and masked feature maps to distinguish benign neurons from backdoored ones.

- BAN has shown efficiency and scalability in identifying backdoors in neural networks, achieving an average accuracy of about 97.22% across different architectures.

- Given the challenge of detecting backdoors that do not significantly impact model performance, continuous monitoring and research in this area are crucial for securing deep neural networks.

- Research and advancements in the field of neural network security, especially in combating backdoor attacks, are essential for maintaining the integrity of machine learning systems.

Medium

Week 17 Update: Building Kolmogorov-Machine for Neural Network Analysis

- This week's focus was on developing the Kolmogorov-Machine module for analyzing neural network distributions.

- Transformew2 was successfully integrated as a git submodule to improve repository organization.

- Extensive educational content was added on layer normalization, neural network fundamentals, and specialized neurons.

- Challenges include managing repository complexity, cross-framework compatibility, and incomplete code examples.

Hackernoon

Image Credit: Hackernoon

Tired of Slow Python ML Pipelines? Try Purem

- Purem is a high-performance AI/ML computation engine that aims to provide native speed to Python code, offering 100–500x acceleration for real-world ML operations.

- Unlike traditional Python accelerators, Purem focuses on optimizing core operations at a pure binary level, eliminating overhead and serialization issues.

- It bridges the Python-hardware performance gap by precompiling core operations for x86-64 and enabling zero Python overhead data flow.

- Purem ensures easy deployment with pip installation, compatibility with Python 3.7+, and production-ready features such as test coverage and logging.

- Benchmark comparisons show significant speedups of Purem over NumPy, JAX, and PyTorch, ranging from 100x to 500x on core operations.

- Current ML libraries like JAX and PyTorch face limitations on CPUs, while NumPy and Pandas struggle with scalability and single-threaded performance.

- Purem unlocks use cases in fintech, edge AI, big data, and ML research by providing rapid computation without infra rewrites or performance compromises.

- Example-driven and performance-focused, Purem sets a new standard for Python-native performance in AI/ML applications.

- It is positioned as SLA-grade and production-ready, aiming to enhance the productivity and performance of teams working at real scale.

- Purem encourages the next generation of AI engineering by offering accelerated computation without sacrificing Python’s elegance and productivity.

Medium

How AI Will Change Everyday Life by 2030

- By 2030, every student could have their own AI-powered tutor, bringing personalized and effective education.

- Doctors will work side-by-side with AI to diagnose diseases earlier and more accurately, leading to longer lives and lower medical bills.

- AI will make homes and cities feel almost magical, with automated adjustments of lights, temperature, music, and even AI-driven public transport.

- Routine jobs will be automated, leading to the rise of new creative and human-focused roles.

Medium

Decoding Life One Sequence at a Time: A Practitioner’s Dive into Protein Prediction

- Predicting and modeling protein sequences efficiently is a challenge in biotechnology and medicine.

- Using techniques inspired by natural language processing, researchers apply sequence modeling to protein sequences.

- A simple LSTM-based language model trained on short protein chains shows the ability of small models to learn biochemical patterns and generate plausible protein sequences.

- Next-token prediction frameworks provide a practical and scalable approach for protein modeling, useful in protein design, mutation prediction, and therapeutic innovation.
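Next-token prediction over protein sequences can be illustrated with a much simpler stand-in for the LSTM mentioned above: a bigram model that counts which residue follows which. The bigram technique, the `^`/`$` boundary markers, and the three toy sequences are assumptions made for this sketch; they are not from the article and the sequences are not real proteins.

```python
import random
from collections import Counter, defaultdict

START, END = "^", "$"  # hypothetical sequence-boundary tokens

def train_bigram(sequences):
    """Count, for every token, how often each next token follows it."""
    counts = defaultdict(Counter)
    for seq in sequences:
        tokens = [START, *seq, END]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Estimated P(next token | previous token) from the counts."""
    total = sum(counts[prev].values())
    return {tok: n / total for tok, n in counts[prev].items()}

def generate(counts, rng, max_len=30):
    """Sample one residue at a time until END or max_len is reached."""
    out, prev = [], START
    while len(out) < max_len:
        probs = next_token_probs(counts, prev)
        tok = rng.choices(list(probs), weights=list(probs.values()))[0]
        if tok == END:
            break
        out.append(tok)
        prev = tok
    return "".join(out)

toy_sequences = ["MKTAYIAK", "MKTLLVAG", "MKVAYLAG"]  # made-up one-letter residue strings
model = train_bigram(toy_sequences)
sample = generate(model, random.Random(0))
```

An LSTM replaces the count table with a learned function of the whole preceding context, but the training signal (predict the next residue) and the sampling loop have the same shape.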

Medium

The Future of AI Coding: How to Boost Productivity with GitHub Copilot and ChatGPT

- GitHub Copilot and ChatGPT are AI coding tools that aim to boost productivity.

- GitHub Copilot is a code completion tool that suggests code in real time, providing speed, learning, and collaboration benefits.

- ChatGPT is a conversational AI model that helps with code generation, debugging assistance, and documentation and learning.

- The future of AI coding includes personalized assistants, seamless integration, and ethical considerations.

Medium

How AI Powers Voice Assistants Like Alexa and Siri

- Voice assistants like Alexa and Siri are powered by AI.

- A voice assistant processes a request in four stages: Automatic Speech Recognition (ASR), Natural Language Understanding (NLU), Natural Language Generation (NLG), and Text-to-Speech (TTS).

- Voice assistants learn from user interactions and improve over time using machine learning.

- The future of voice AI holds the potential for more personal, responsive, and empathetic voice assistants.

Medium

Gender Classification from Voice: A Deep Learning Approach with CNN and Mel Spectrograms

- This project focused on developing a deep learning system to classify gender from voice samples using Convolutional Neural Networks (CNN) and mel spectrograms.

- By interpreting mel spectrograms, the CNN was able to identify differences in how male and female voices behave in frequency and time.

- The project aimed to construct a robust gender classification model through data preparation, feature extraction, CNN training, and performance evaluation.

- Challenges were encountered when working with real-world audio data, emphasizing the complexities of deep learning models.

- Two datasets were utilized, and various audio augmentations were applied during training for improved model generalization.

- Principal Component Analysis (PCA) was considered but found to be unsuitable for audio classification tasks using CNNs due to its limitations.

- CNNs trained on spectrograms learn task-specific features focusing on time and frequency relationships critical in speech data analysis.

- Spectrograms offer visual interpretability compared to abstract PCA components, aiding in understanding pitch, formants, and energy in the signal.

- Instead of PCA, the CNN directly learned from high-resolution spectrograms, while applying regularization techniques to mitigate overfitting.

- This study highlighted that gender classification from voice involves nuanced patterns beyond pitch, effectively tackled by modern deep learning techniques.

- The system achieved over 93% accuracy and demonstrated reliable performance on real-world audio data, offering potential for further exploration in voice analysis.
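For readers unfamiliar with mel spectrograms, the frequency axis is warped so that bands are narrow at low frequencies and wide at high ones. The sketch below shows one widely used Hz-to-mel conversion; the summary does not say which mel variant or how many bands the project used, so the formula choice and the parameters in the usage line are assumptions.

```python
import math

def hz_to_mel(freq_hz):
    """Convert frequency in Hz to mels (common 2595 * log10(1 + f / 700) form)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_band_centers(n_bands, f_min, f_max):
    """Center frequencies (Hz) of n_bands spaced evenly on the mel scale."""
    lo, hi = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (hi - lo) / (n_bands + 1)
    return [mel_to_hz(lo + step * (i + 1)) for i in range(n_bands)]

# Illustrative values only: 8 bands between 0 Hz and 8 kHz.
centers = mel_band_centers(8, 0.0, 8000.0)
```

The centers crowd together at low frequencies, which gives the CNN finer resolution in the range where pitch and the lower formants sit.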

Medium

AI-Generated Tactile Textures Revolutionizing 3D Printing

- MIT's TactStyle system combines AI and 3D printing to create tactile textures that accurately mimic real materials.

- TactStyle's breakthrough allows 3D-printed objects to have both visual and tactile properties, making them truly tangible.

- The fusion of AI and 3D printing is revolutionizing how we experience objects, bringing digital designs to life.

- TactStyle's technology opens up new possibilities for creating textured surfaces in various industries.
