Deep Learning News

Medium


How LLMs Learn: The Pre-Training Phase Explained

- Large language models (LLMs) learn during the pre-training phase by being fed a huge amount of text to understand language rules and context.

- Common Crawl provides data from 250 billion web pages for pre-training, but preprocessing to remove noise is crucial.

- Tokenization breaks text into manageable tokens for numerical processing, with methods like Byte Pair Encoding (BPE) being common.

- Models like GPT-4o use subword-based tokenization to handle large vocabularies more efficiently.

- Training involves Next Token Prediction and Masked Language Modeling to learn language structure and relationships between tokens.

- Base models learn to generate text one token at a time and serve as a starting point for further fine-tuning.

- Base models can memorize text patterns but may struggle with reasoning tasks due to limited structured understanding.

- In-context memory allows base models to adjust responses based on the provided context, demonstrating versatility without fine-tuning.

- Base models excel in replicating text based on memorized patterns but may lack originality and deep reasoning abilities.

- In the pre-training phase, LLMs develop foundational skills by learning from raw data before advanced techniques are applied for post-training.
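The Byte Pair Encoding step mentioned above can be sketched in a few lines. This is a minimal, character-level illustration of how merge rules are learned, not the tokenizer of any particular model; the function names and the toy corpus are assumptions made for the example.

```python
from collections import Counter


def most_frequent_pair(words):
    """Count adjacent symbol pairs across all words, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    # On ties, max() keeps the pair that was seen first.
    return max(pairs, key=pairs.get) if pairs else None


def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(corpus, num_merges):
    """Learn up to `num_merges` merge rules from a whitespace-split corpus."""
    words = dict(Counter(tuple(word) for word in corpus.split()))
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges, words


# Toy corpus (hypothetical): frequent character pairs become subword tokens.
merges, vocab = learn_bpe("low low low lower lowest", 2)
print(merges)  # [('l', 'o'), ('lo', 'w')]
```

Production tokenizers typically work on bytes, apply pre-tokenization rules, and learn tens of thousands of merges, so treat this only as a picture of the core loop.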


Medium

259

Image Credit: Medium

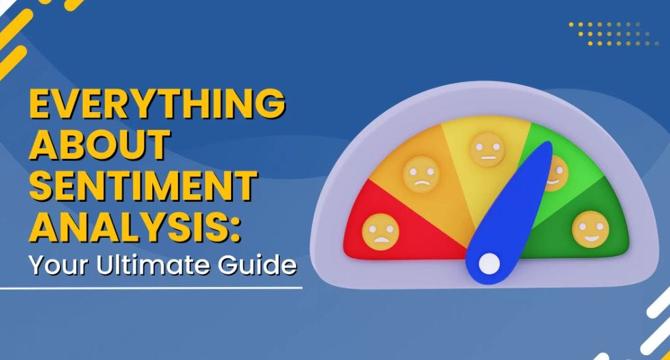

Understanding Sentiment Analysis: A Simple Guide

- Sentiment analysis involves analyzing text to determine whether it conveys a positive, negative, or neutral sentiment.

- There are three main approaches to sentiment analysis: rule-based systems, traditional machine learning, and deep learning.

- Rule-based systems follow predefined rules and word lists to identify sentiment based on certain keywords.

- Machine learning algorithms learn from data and identify sentiment based on patterns and examples.
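As a rough illustration of the rule-based approach described above, the sketch below scores text against small word lists and flips polarity after a negation word. The word lists and the one-word negation rule are assumptions chosen for the example; real lexicons are far larger.

```python
import re

# Hypothetical, deliberately tiny word lists.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "sad"}
NEGATIONS = {"not", "never", "no"}


def rule_based_sentiment(text):
    """Label text as positive, negative, or neutral using keyword rules."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        # +1 for a positive keyword, -1 for a negative one, 0 otherwise.
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        # Flip the polarity when the previous word is a negation.
        if polarity and i > 0 and tokens[i - 1] in NEGATIONS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(rule_based_sentiment("I love this great phone"))
print(rule_based_sentiment("This is not good"))
print(rule_based_sentiment("The package arrived on Tuesday"))
```

A machine learning approach would replace the hand-written lists with weights learned from labeled examples.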


Medium


GPT Top 5 Rankings along with use

- GPT-4: A multimodal AI model that processes text and images, with a 40% accuracy improvement over GPT-3.5.

- ChatGPT: A chatbot that offers real-time web browsing and integrations, ideal for brainstorming and drafting.

- Gemini Advanced: Google's AI model integrated with Google Workspace, excelling in data analysis and multilingual tasks.

- Claude 2: An AI model suitable for legal contracts and academic papers, prioritizing safety and ethics.

- LLaMA 2: A customizable, open-source model for developers, beneficial in niche applications.


Medium


AI in Education 2025: Transform Learning with Personalized AI Teaching Tools & Adaptive Technology

- Artificial Intelligence in education is at the forefront of revolutionizing how students learn and engage with content.

- AI offers customized learning experiences that cater to individual needs and preferences.

- In 2025, AI in education is expected to provide personalized teaching tools and adaptive technology.

- AI platforms like DreamBox act as personal tutors, guiding students through their educational journey.


Medium


Exploring Alternative Mobile Phones: A Guide for Non-Mainstream Users

- Non-mainstream mobile phones hold a promising future filled with numerous possibilities.

- These devices will utilize advanced technologies including flexible screens and AI interfaces to improve user experience.

- Businesses can find abundant opportunities through offering customized designs and unique aesthetics that meet unmet consumer needs while distinguishing their products from the competition.

- Non-mainstream mobile phones need to adopt innovative solutions to tackle their specific challenges in order to establish a clear market segment.


Medium


Evolution of Computer Vision Trends You Need To Know

- Computer vision trends leading to 2025 involve advancements in Vision Transformers, Edge AI, Generative AI, 3D computer vision, Ethical AI, Explainable AI, AGVs, Multi-Modal AI, deepfake detection, and Self-Supervised Learning.

- Vision Transformers (ViTs) have been revolutionary, using self-attention to process entire images at once and surpassing CNNs in object classification and segmentation.

- Edge AI facilitates real-time processing on devices like smart cameras and drones, reducing latency, improving privacy, and enhancing efficiency for applications like self-driving cars and security systems.

- Generative AI generates synthetic data for training models, automates labeling, and drives advancements in healthcare, entertainment, and research by creating realistic outputs from various data sources.

- 3D computer vision enhances depth perception and object recognition using technologies like LiDAR and depth sensors, crucial for industries like robotics, self-driving cars, and digital twins.

- Ethical AI addresses bias issues in computer vision, focusing on fairness, transparency, and data privacy to ensure AI systems are less biased and more equitable.

- Explainable AI (XAI) increases transparency in AI decision-making, providing logical explanations for actions, crucial in industries like health services, financial activities, and security.

- AGVs automate logistic operations in warehouses and factories, using onboard computers and sensors to enhance speed, accuracy, and utility with advanced vision technology.

- Multi-Modal AI combines computer vision with text, speech, and other data, improving systems' intelligence and context-awareness, leading to applications like visual question answering.

- Computer vision plays a critical role in detecting deepfakes to combat the spread of misinformation and propaganda by identifying alterations in images.

- Self-supervised learning advances AI models like Vision Transformers, reducing human bias and powering real-time applications in robotics, surveillance, and healthcare.


Medium

198

Image Credit: Medium

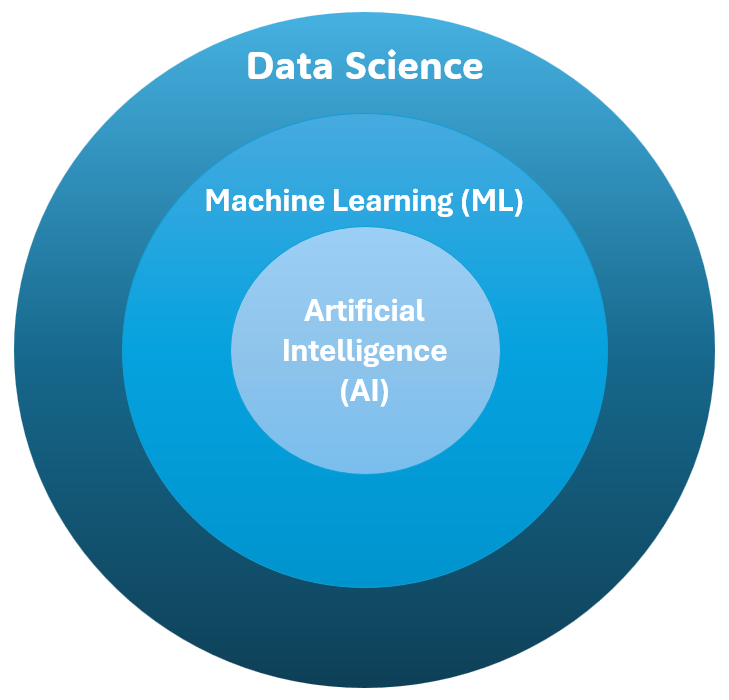

Data Science Or Artificial Intelligence: What Comes First?

- At the heart of every AI system lies Data Science, which focuses on collecting, processing, and analysing data to extract meaningful insights.

- Machine Learning (ML) is a part of Data Science. ML algorithms use structured data to learn patterns, make predictions, and automate decision-making processes.

- AI goes beyond pattern recognition and prediction, aiming to mimic human intelligence through advanced techniques such as Deep Learning, Neural Networks, and NLP.

- While AI relies on Data Science, it also enhances and automates many Data Science workflows, improving data cleaning, model selection, and insight generation.


Medium


Neural Networks for Stock Prediction: How LSTMs & GNNs Transform Investment Strategy

- Neural networks are transforming stock prediction strategies through advanced technology.

- Investors are utilizing deep learning models to analyze stock trends for more accurate predictions and improved market timing.

- The application of LSTM and GNN models is gaining traction in stock prediction.

- These advancements offer investors a smarter way to navigate the financial markets.


Medium


Neural Networks in Image Recognition: How AI Sees and Understands Visual Data

- Neural networks are revolutionizing image recognition and expanding possibilities.

- An individual reflects on their experience with image recognition in a gallery and ponders if machines can perceive art like humans.

- The story narrates how digital neurons mimic the intricacies of the human brain, leading to transformative discoveries.

- The article explores the magic of machine vision.


Medium


Building an AI Powered Resume Analyzer — Phase 01 Implementation

- An AI-powered Resume Analyzer is being developed to streamline the resume analysis process.

- Phase 01 highlights the development of a full-stack tool with AI integration, using OpenAI's generative AI and LLMs.

- Key features released in this phase include Smart AI dashboard, resume parsing with AI-powered NLP, real-time AI guide for improvements, and skills extraction.

- The system is designed to offer resume recommendations, improvements, and data visualizations, enhancing recruitment processes.

- The tech stack includes React with TypeScript, Vite, and Tailwind CSS for the frontend, and Python, FastAPI/Flask, the OpenAI API, spaCy, and pdfplumber for the backend.

- The architecture includes components for file processing, AI analysis, authentication, and data storage using PostgreSQL and Firebase Firestore.

- Third-party services like OpenAI API and Firebase Authentication aid in resume analysis and real-time updates.

- Future phases will introduce advanced features like resume-to-job matching, AI-driven recommendations, and recruiter-friendly tools.

- Expected outcomes include improved efficiency in applicant filtering and personalized AI-driven resume enhancements for job seekers.

- The platform aims to become a fully automated hiring assistant, leveraging AI for smarter and faster recruitment processes.


Medium

241

Image Credit: Medium

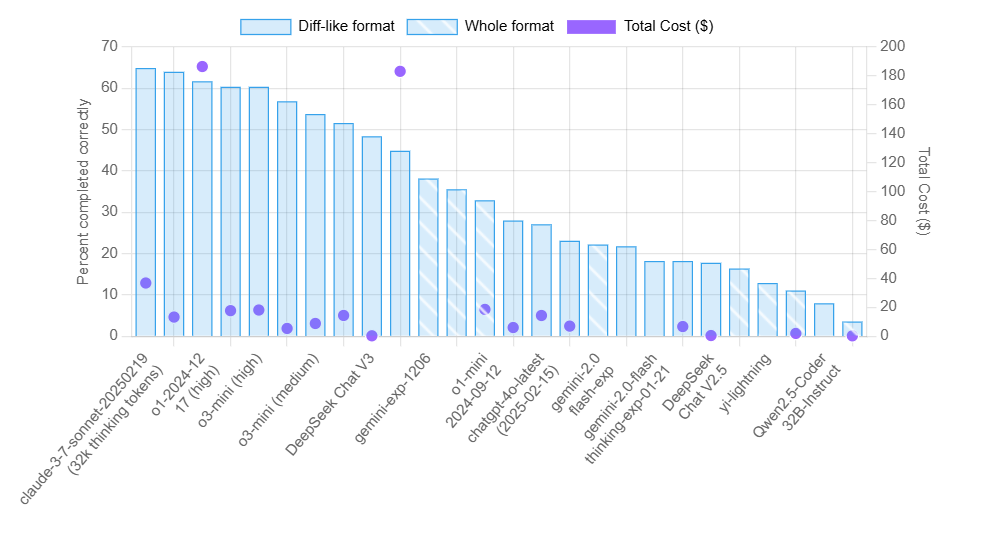

How we decide among the best Large Language models

- MMLU (EM) and its variants, MMLU-Redux and MMLU-Pro, assess language models across multiple subjects using thousands of questions, highlighting the models' world knowledge and problem-solving skills.

- The DROP dataset challenges models with discrete reasoning tasks over paragraphs, with '3-shot' guiding prompts and 'F1' metric measuring accuracy in reading comprehension.

- IF-Eval (Prompt Strict) evaluates the model's adherence to instructions, focusing on strict prompt compliance to test the model's ability to follow guidelines precisely.

- GPQA benchmark features difficult questions in science fields, emphasizing advanced reasoning, with 'Pass@1' metric measuring accuracy in the model's initial responses.

- SimpleQA tests factual accuracy in language models across various topics, utilizing the 'Correct' metric to measure the model's accuracy in providing correct answers.

- FRAMES benchmark evaluates RAG systems on complex, multi-hop questions, focusing on factual accuracy, retrieval effectiveness, and reasoning skills.

- LongBench v2 assesses large language models on in-depth understanding tasks, with models like OpenAI’s o1-preview exceeding human performance.

- HumanEval-Mul and LiveCodeBench evaluate LLMs in code generation tasks across different programming languages, emphasizing accuracy on the first attempt.

- Codeforces ranks users based on performance in competitive programming, indicating proficiency levels with assigned titles and color codes.

- SWE-bench Verified ensures practical coding challenge evaluation by validating tasks curated from GitHub repositories, enhancing benchmark reliability.
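Two of the metrics named above, F1 for reading comprehension and Pass@1 for first-attempt accuracy, can be sketched as follows. This is a simplified illustration: the function names are assumptions, and official benchmark scorers usually apply extra answer normalization (punctuation, articles, numbers) that is omitted here.

```python
from collections import Counter


def token_f1(prediction, reference):
    """Token-overlap F1 between a predicted answer and a reference answer."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def pass_at_1(first_attempt_passed):
    """Fraction of problems solved by the model's first response."""
    return sum(first_attempt_passed) / len(first_attempt_passed)


print(round(token_f1("the cat sat", "the cat"), 3))  # 0.8
print(pass_at_1([True, False, True, True]))          # 0.75
```

F1 rewards partial overlap with the reference answer, while Pass@1 is all-or-nothing per problem, which is why the two are used for different kinds of tasks.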


Towards Data Science


Debugging the Dreaded NaN

- To implement a NaN capturing solution in PyTorch, one can use PyTorch Lightning's callback interface.

- A NaNCapture Lightning callback is created to handle NaN events during training.

- The callback stores corrupted models and halts training upon encountering NaN values.

- Reproducibility is ensured by including NaNCapture state in the checkpoints for debugging.

- Loading the stored training batch for debugging relies on Lightning's LightningDataModule.

- Testing the callback involves creating a problematic model to trigger NaN occurrences.

- Runtime performance is minimally impacted by the NaNCapture callback, providing valuable debug capabilities.

- Enhancements like capturing and restoring random states for reproducibility are also discussed.

- Encountering NaN failures in machine learning can be challenging and indicate model issues.

- The proposed approach using Lightning callback streamlines NaN error debugging.

- This solution can save developers significant time and effort in debugging NaN errors.
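The capture-and-halt idea summarized above can be shown without any framework. The sketch below is a plain-Python stand-in, not the article's NaNCapture callback and not PyTorch Lightning's API: the class, hook method, training loop, and toy loss are all hypothetical names chosen for illustration.

```python
import math


class NaNDetected(RuntimeError):
    """Raised to halt training when a NaN loss is observed."""


class NaNCaptureSketch:
    """Hypothetical hook: remembers the offending batch, then stops training."""

    def __init__(self):
        self.captured = None

    def on_batch_end(self, step, batch, loss):
        if math.isnan(loss):
            # Keep what is needed to reproduce the failure later.
            self.captured = {"step": step, "batch": batch}
            raise NaNDetected(f"NaN loss at step {step}")


def train(batches, loss_fn, hook):
    """Toy training loop that calls the hook after every batch."""
    steps_done = 0
    for step, batch in enumerate(batches):
        loss = loss_fn(batch)
        hook.on_batch_end(step, batch, loss)
        steps_done += 1
    return steps_done


def toy_loss(batch):
    """Stand-in loss: the mean of the batch (NaN if any input is NaN)."""
    return sum(batch) / len(batch)


# The second batch contains a corrupted value, so training halts at step 1.
batches = [[1.0, 2.0], [3.0, float("nan")], [5.0, 6.0]]
hook = NaNCaptureSketch()
try:
    train(batches, toy_loss, hook)
except NaNDetected as err:
    print(err, "captured batch:", hook.captured["batch"])
```

In a real setup the captured state would also include model weights and, as the summary notes, random states, so the failing step can be replayed exactly.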


Medium


Building a Bidirectional Code Converter: Pseudocode ↔ C++ Using Transformers and Streamlit

- The article walks through building a bidirectional code converter using Transformers and Streamlit.

- Two separate Transformer-based models were trained for conversion between pseudocode and C++.

- A custom tokenization strategy was designed to handle pseudocode constructs and C++ syntax.

- The models were deployed using Streamlit for user-friendly interaction.
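The summary does not describe the article's tokenization strategy, so the following is only a guess at what a tokenizer covering both pseudocode and C++ might look like: a single regular expression that keeps identifiers, numbers, string literals, and multi-character operators intact. The pattern and function name are assumptions.

```python
import re

# Hypothetical token pattern; alternatives are tried left to right.
TOKEN_RE = re.compile(
    r'''
      [A-Za-z_]\w*                                # identifiers and keywords
    | \d+(?:\.\d+)?                               # integer or decimal numbers
    | "(?:\\.|[^"\\])*"                           # double-quoted string literals
    | <<|>>|<=|>=|==|!=|&&|\|\||\+\+|--|->|::     # multi-character operators
    | \S                                          # any other single symbol
    ''',
    re.VERBOSE,
)


def tokenize(source):
    """Split pseudocode or C++ source text into a flat list of tokens."""
    return TOKEN_RE.findall(source)


print(tokenize("set x to x + 1"))
print(tokenize('cout << "hi" << x;'))
print(tokenize("for(int i=0;i<=n;i++)"))
```

Keeping operators such as `<<` and `<=` as single tokens gives a sequence model shorter inputs and avoids splitting symbols whose meaning depends on being read together.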

Read Full Article

23 Likes

Medium


How Do We Measure AI Smarts? A Simple Guide to LLM Evaluation

- LLM evaluation consists of various benchmarks to measure different aspects of AI smarts.

- The benchmarks include HellaSwag for commonsense reasoning, HumanEval for coding skills, TruthfulQA for resistance to misinformation, BIG-bench for creative and diverse language tasks, CodeXGLUE for programming and code understanding, Chatbot Arena for conversational quality, and MT Bench for complex conversational ability.

- The benchmarks assess knowledge across different subjects, logical reasoning, coding skills, resistance to misinformation, diverse language tasks, programming capabilities, conversational quality, and multi-turn dialogues.

- The evaluation aims to understand if AI models possess real-world knowledge, can apply everyday logic, understand and write code accurately, provide safe and accurate information, handle unexpected and creative language challenges, assist with programming tasks, engage in coherent conversations, and sustain meaningful dialogues.


Unite


Breaking Down Nvidia’s Project Digits: The Personal AI Supercomputer for Developers

- Nvidia's Project Digits is a personal AI supercomputer for developers offering advanced GPU technology and optimized AI software for faster model training and efficiency.

- The system runs on the GB10 Grace Blackwell Superchip, providing up to 1 petaflop of AI performance and supporting models with up to 200 billion parameters.

- It includes 128GB of unified memory and up to 4TB of NVMe storage, with NVLink-C2C interconnect for efficient data transfer in computer vision and NLP tasks.

- Project Digits comes preloaded with AI frameworks like TensorFlow, PyTorch, CUDA, NeMo, RAPIDS, and Jupyter notebooks, enabling local model training with scalability to cloud or data center environments.

- It is compact, energy-efficient, and priced at $3,000, making high-end AI computing accessible for individual developers and small teams.

- Project Digits accelerates AI training, reduces costs compared to cloud services, and streamlines development workflows with preinstalled AI tools.

- The system can be used by medical professionals for faster disease detection, autonomous vehicle developers for improved navigation, and companies developing chatbots and virtual assistants for better interactions.

- Project Digits offers more control than cloud-based platforms, easier setup than traditional on-premise systems, scalability without complex hardware expansion, and high performance without bottlenecks.

- With a one-time cost starting at $3,000, Project Digits provides cost-effective AI computing for developers, making AI development faster, more affordable, and accessible.

- Overall, Project Digits empowers developers to work on AI projects without the limitations of cloud services or the complexities of traditional on-premise systems, enhancing speed, efficiency, and affordability in AI development.
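A quick back-of-the-envelope calculation helps relate the 200-billion-parameter figure to the 128GB of unified memory. The summary does not state the numeric precision involved, so the bit widths below are assumptions, and the estimate covers model weights only (no activations, caches, or framework overhead).

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 200_000_000_000   # 200 billion parameters, from the summary
UNIFIED_MEMORY_GB = 128    # unified memory, from the summary

# Assumed precisions: 16-bit, 8-bit, and 4-bit weights.
for bits in (16, 8, 4):
    needed = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if needed <= UNIFIED_MEMORY_GB else "does not fit"
    print(f"{bits}-bit weights: {needed:.0f} GB ({verdict} in {UNIFIED_MEMORY_GB} GB)")
```

Under these assumptions, a 200-billion-parameter model only fits in 128GB when its weights are stored at roughly 4 bits each, which suggests the headline figure refers to heavily quantized models.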
